“If you feel queasy, I can turn this off,” offers Peter Der Manuelian. At the flight controls of a small aircraft, the King professor of Egyptology is following a line of tall palm trees along a causeway that stretches across the Egyptian desert. We’re moving fast and low, vertiginously, just above the treetops. As Manuelian swoops in close to one particularly large palm, his passengers instinctively lift their legs to avoid a collision.

But there is no real danger. The passengers are students seated in a visualization center (built for earthquake simulations and the study of geology, but generously loaned by Dudley professor of structural and economic geology John H. Shaw) in front of a 23-foot-wide, cylindrical wraparound screen on which Manuelian is projecting a virtual world in 3-D. He is taking them on a field trip to the Giza Plateau as it appeared 4,500 years ago, when the pharaoh Khufu died around 2566 B.C.

Manuelian steers over to watch Khufu’s funeral ceremony in progress. The pharaoh’s mummified body lies in a coffin, surrounded by priests wearing leopard skins and mourners chanting spells. These are avatars: some even have faces re-created from statues of Egyptian officials of that era. Manuelian, prompted by a student question, next plunges down a shaft to a plundered burial chamber that no one has seen in 106 years, since George Reisner, Harvard’s first professor of Egyptology, took the detailed photographs that made this re-creation possible. Wood from the damaged coffin and bones litter the floor. The class visits the harbor beside the plateau, then the quarry where limestone blocks are being cut, and views from a distance the great stone outcrop that will eventually become the Sphinx.

What Manuelian has created is a visualization—a teaching and research tool more powerful than a video, which is linear. “When I am asked a question about something at the site, we can navigate over in real time to look at it,” he says. “And we can experience the site at various times: when the pyramid is half built, or three-quarters built, or completed.” Building a virtual world (as he and his team have done in partnership with Dassault Systèmes, Paris) enhances research, too, he adds: it underscores what isn’t known. “The process raises all sorts of research questions: Was the mummy embalmed in the temple or in some kind of purification tent somewhere else? Should this canopy be in the middle of the courtyard? How many statues were set up in the niches?” Nevertheless, everything from the relationships among buildings to the heights of walls and the locations of statues and funerary objects is based on the best possible archaeological evidence: art objects, glass-plate expedition photo negatives, archaeological drawings, notes, and diaries assembled by Reisner and the Harvard University-Boston Museum of Fine Arts Expedition between 1905 and 1947, during their carefully documented excavations at Giza. Manuelian, who with funding from the Mellon Foundation oversaw the transformation of Reisner’s detailed records into a browsable public website (www.gizapyramids.org), has made possible the creation of this immersive, long-ago world.

Like pyramid-building itself, the work of the humanities is to create the vessels that store our culture. In this sense, the digitization of archives and collections holds the promise of a grand conclusion: nothing less than the unification of the human cultural record online, representing, in theory, an unprecedented democratization of access to human knowledge. Equally profound is the way that technology could change the way knowledge is created in the humanities. These fields, encompassing the study of languages, literature, history, jurisprudence, philosophy, archaeology, religion, ethics, the arts, and arguably the social sciences, are entering an experimental period of inventiveness and imagination that involves the creation of new kinds of vessels—be they databases, books, exhibits, or works of art—to gather, store, interpret, and transmit culture. Pioneering scholars are engaged in knowledge design and new modes of research and expression, as well as fresh reflection and innovation in more traditional modes of scholarly communication: for example, works in print that are in dialogue with online resources.

One way that scholars have sought to come to grips with digitization in the humanities, says Peter K. Bol, Carswell professor of East Asian languages and civilizations, is through the lens of information management, by understanding its impact on the four phases of a typical research cycle: finding, organizing, analyzing, and disseminating information. Scholars traditionally begin projects by figuring out what the good research questions are in a given field, and connecting with others interested in the same topics; they then gather and organize data; then analyze it; and finally, disseminate their findings through teaching or publication.

Scholarship in a digital environment raises questions about every aspect of this process. For example, in gathering and organizing data, “Faculty and students are creating digital collections, some of which turn out to be extremely valuable, and that don’t exist anywhere else,” Bol points out. The publication of a book about wild urban plants (see “Off the Shelf”) might first involve the assembly of a database of plants (it did). In some contexts, that database—because it allows information to be understood in relationship to other kinds of information—may be more valuable than the book.

This raises the question: who will archive these databases? As Bol puts it, “Where do libraries fit within the information-management equation?” That is one kind of question the Harvard Library is busy reimagining and repositioning itself to address, and a question the University’s central administration is confronting by assembling an ecosystem of resources, services, and support to aid professors in their teaching and research. But digitization of archives also has the capacity to change the traditional division of labor in humanities scholarship in fundamental ways—for example, by empowering ordinary people to participate in the creation, curation, and interpretation of collections.

Proletarian Voices

Fast forward to January 25, 2011. Just a few miles from the pyramids, a revolution has begun. Protestors jam the streets of Cairo seeking the ouster of Egyptian president Hosni Mubarak. And on the West Coast of the United States, Todd Presner, a professor of Germanic languages, comparative literature, and Jewish studies at UCLA, quickly sets up a means to capture this history as it unfolds. As part of a project called Hypercities, he and his collaborators record voices from Cairo through social media in real time—Twitter feeds from individual eyewitnesses on the ground: “Gun shots heard in our street in Mohandeseen [an upscale neighborhood in Giza] and the army is bringing in tanks.” Then, “Tanks on the streets in Cairo.”

The tweets, their rough locations pulsing on an accompanying map of the city, allow a user to go back in time and experience this event moment by moment, or to search for keywords. They record everything from concern over what could happen when soldiers encounter demonstrators to the voices of protest organizers: “Tomorrow we meet 9 a.m. in Tahrir [Square]. We will march on Mubarak’s presidential palace in Heliopolis. Down with the dictator.” Eventually, the tweets make clear that the army will not interfere with the populist uprising: “Protesters are writing ‘Down with Mubarak’ on all the army tanks near Tahrir Square. Soldiers love it!”; and “Saw one pro-Mubarak supporter who was caught with a gun, arrested by protesters. He sat by army tanks, in tears.”

A few weeks later, Presner demonstrated Hypercities Cairo—this continuing citizen-archive of a revolution in progress now called Hypercities Egypt—at a symposium at Harvard. He was in Cambridge with other friends and colleagues of Jeffrey Schnapp, a catalyzing force in the digital humanities who joined the Harvard faculty in 2011, serving as a faculty co-director of the Berkman Center for Internet and Society, and directing the metaLAB (at) Harvard, a research and teaching collaborative that charts “innovative scenarios for the future of knowledge creation and dissemination in the arts and humanities.” Also presenting that day was metaLAB co-founder Jesse Shapins, a lecturer in architecture who was one of three Harvard-affiliated designers of a software program called Zeega. (Originally conceived to create database documentaries, Zeega has become a nonprofit creative technology company.) Zeega software allows the integration of media of all kinds (video, photographs, audio, text) from multiple sources into a single, cohesive interface: a multiformat montage. Audience members found the transformational possibilities of these technologies for scholarship tantalizing.

Crowdsourcing a Crisis

Capturing the tweets of ordinary people living through extraordinary moments is one approach to assembling a raw record of history. But what would happen to the traditional scholarly research cycle if those ordinary people could themselves become active “participants” in gathering and even interpreting archival material? A group of researchers at the Reischauer Institute of Japanese Studies (RIJS) is about to find out.

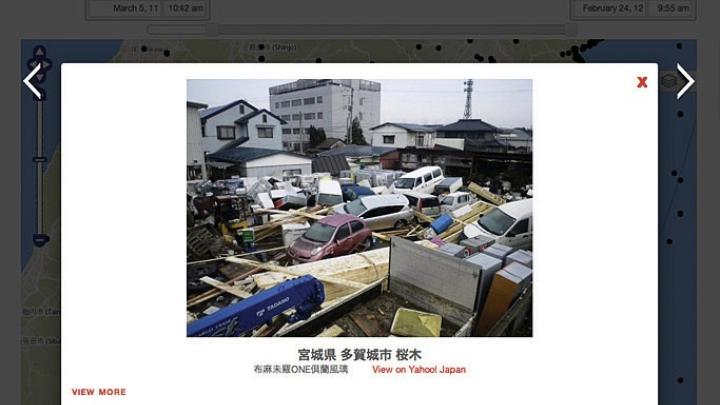

When the largest tsunami in Japan’s recorded history struck in March 2011, wreaking horrific damage up and down the northeastern coast, Folger Fund professor of history Andrew Gordon recalls that he and other Harvard scholars of Japanese culture “had a sense that this was an event that would probably change people’s sense of time” in Japan. “The immediate devastation was extraordinary, and compounded by the ensuing nuclear accident. The prime minister called it ‘Japan’s greatest crisis since World War II,’” notes Gordon, the director of RIJS. Faculty members and students alike organized fundraisers, sent volunteers to help on the ground (with the assistance of alumni members of the Harvard Club of Japan), and convened events to understand the catastrophe.

But a core group of faculty members also felt obligated to try to capture the ephemeral documentation of the crisis that was appearing on the Internet. The Reischauer Institute had experience with web-archiving; a decade earlier it had begun collecting online materials relating to potential revisions to Japan’s constitution. But Gordon soon realized the 2011 crisis was generating two orders of magnitude more archival material than that earlier project. The sheer volume of information, coming from 10,000 websites, was too great—and diverse in format—for Gordon’s group to capture by themselves.

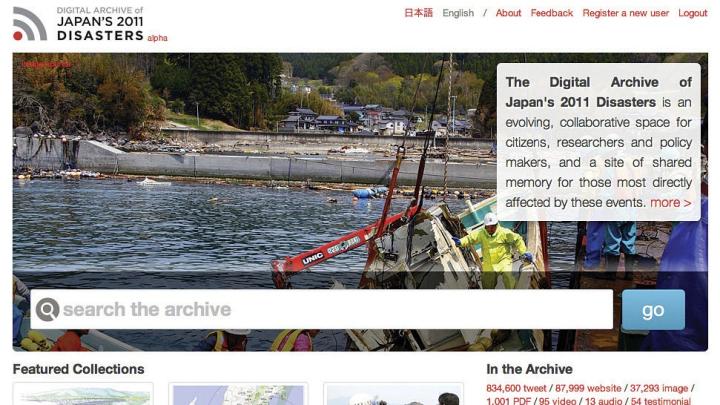

Fortunately they were not alone. Other groups and institutions were also collecting data: the Internet Archive (a major American institution devoted to preserving the record of the Internet for posterity), Tohoku University in Sendai, Yahoo Japan, All311 Archive (a newly created nonprofit Japanese organization), Japan’s National Diet Library, and many others. Much of this material was collected without being catalogued: saved, but not searchable. Metadata—the kind of information professional librarians assign to books as a finding aid—was not always included or in some cases was quite limited. As the RIJS team forged partnerships with other institutions, and the idea of creating a networked archive took shape, they created a common form for the submission of such data with help from the Harvard Library. Harvard now hosts a significant portion of the metadata provided by its partners—the glue that holds this network of archives together—which it has indexed, made searchable, and now supplies freely to all the project partners. Most of the raw material in the archive is stored elsewhere.

How to make the archive useful was another question. The materials were not just blogs and listservs, but also government documents, YouTube videos, sound recordings, photo collections, personal testimonials, maps, and social media. Gordon asked Konrad Lawson, a technologically savvy doctoral candidate with a deep knowledge of Japan’s history, language, and society, to manage Harvard’s role in the complex process of building a networked archive. He also contracted with metaLAB—a place engaged in critical discourse and reflection about the nature of archives in a digital age, among other topics—to help them think about how to develop a networked and dynamic interface. And metaLAB, in turn, partnered with Zeega to design the interface.

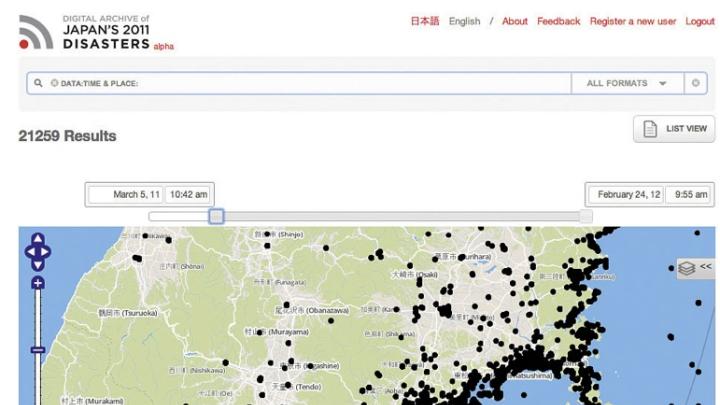

The software that runs the resulting Digital Archive of Japan’s 2011 Disasters (a test version is available for use now at www.jdarchive.org) is a customized deployment of the Zeega platform. It includes maps with layered geodata from Harvard’s Center for Geographic Analysis (directed by Bol), Twitter feeds and geographic information system (GIS) data generously provided by the Hypercities Project, and 50,000 photos from Yahoo Japan; first-person accounts of the events; and thousands of official documents. Much additional content will be integrated into the archive as various partners make it available in coming months. The software searches this vast array of material by making API calls—requests—to partner archives. (Application programming interfaces allow projects and databases to talk to each other in a common programming language.) These exchanges, Lawson explains, create solid and bidirectional relationships between archives. Photo sharing and social media companies use public APIs “to embed Twitter feeds into blogs or connect apps with Facebook,” he continues. “This is now leaking into the world of archives and the academic world in a big way, and institutions like metaLAB and some of the leading technology companies in Japan” are making this type of interface available and useful for researchers.
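To make the idea of searching through API calls concrete, here is a minimal sketch of a federated search in Python. Everything in it is hypothetical: the partner names, the record fields, the sample titles, and the `search` functions are illustrative stand-ins, and in-memory lists take the place of real network requests. It is not the Zeega or jdarchive.org code.

```python
from typing import Callable, Dict, List

Record = Dict[str, str]
SearchFn = Callable[[str], List[Record]]

# Hypothetical in-memory stand-ins for two partner archives.
# A real deployment would send requests to each partner's API instead.
PARTNER_A: List[Record] = [
    {"id": "a1", "title": "Sendai harbor after the tsunami", "type": "photo"},
    {"id": "a2", "title": "Evacuation notice, Miyagi Prefecture", "type": "document"},
]
PARTNER_B: List[Record] = [
    {"id": "b1", "title": "Eyewitness account from Sendai", "type": "testimonial"},
]


def make_partner_api(records: List[Record]) -> SearchFn:
    """Return a search function that mimics one partner's API endpoint."""
    def search(query: str) -> List[Record]:
        q = query.lower()
        return [r for r in records if q in r["title"].lower()]
    return search


def federated_search(query: str, partners: Dict[str, SearchFn]) -> List[Record]:
    """Send the same query to every partner and merge the answers.

    Each hit is labeled with its source, because the item itself
    stays in the partner's own archive.
    """
    results: List[Record] = []
    for name, search in partners.items():
        for record in search(query):
            results.append({**record, "source": name})
    return results


partners = {
    "partner_a": make_partner_api(PARTNER_A),
    "partner_b": make_partner_api(PARTNER_B),
}
hits = federated_search("sendai", partners)
for hit in hits:
    print(hit["source"], hit["id"], hit["title"])
```

The design point is that the central index only asks questions and collects answers; the photographs, documents, and testimonials remain wherever each partner stores them.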

Simply as a search tool, the project will have a “much better signal to noise ratio” than Google would for this subject, says Gordon. But a truly distinctive feature is that the archive is interactive: users will be able to add tags (descriptions and keywords) to the material without changing the original, which might be stored on another continent. Those new descriptive entries then become part of an object’s metadata. With crowdsourced information like this, “There is a risk that somebody might tag something incorrectly, so it is like Wikipedia in that sense,” Gordon acknowledges, “but we think the benefits far outweigh the risks.” Users will also be able to enrich the data further by creating, and saving, specialized collections of material, so that other users interested in the same topics—the impact of the disaster on the fishing industry, for example—can access them.
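One way to picture tagging "without changing the original" is to keep user contributions in a separate metadata layer that points at items by identifier. The sketch below assumes that design; the class names, the item identifier, and the collection name are all invented for illustration, and the archive's actual data model may differ.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass(frozen=True)
class ArchiveItem:
    """An original object held by a partner archive (frozen: it cannot be edited)."""
    item_id: str
    title: str


@dataclass
class MetadataIndex:
    """A separate layer of user-contributed tags and collections."""
    tags: Dict[str, Set[str]] = field(default_factory=dict)
    collections: Dict[str, List[str]] = field(default_factory=dict)

    def add_tag(self, item: ArchiveItem, tag: str) -> None:
        # Tags are normalized so "Fishing Industry" and "fishing industry" match.
        self.tags.setdefault(item.item_id, set()).add(tag.strip().lower())

    def add_to_collection(self, name: str, item: ArchiveItem) -> None:
        members = self.collections.setdefault(name, [])
        if item.item_id not in members:
            members.append(item.item_id)

    def find_by_tag(self, tag: str) -> List[str]:
        wanted = tag.strip().lower()
        return sorted(i for i, t in self.tags.items() if wanted in t)


# Hypothetical item and user actions.
photo = ArchiveItem(item_id="photo-001", title="Fishing boats in harbor")
index = MetadataIndex()
index.add_tag(photo, "Fishing Industry")
index.add_to_collection("fishing-industry-impact", photo)
print(index.find_by_tag("fishing industry"))
```

Because the tags live in the index rather than on the item, a mistaken tag can be corrected or removed without ever touching the original record.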

The software allows users to “author multimedia work,” explains Jesse Shapins. “Where Zeega offers something unique is in making archives and libraries living, creative places. They become places where information is not only accessed, but where information and knowledge are used and created.”

There is also a social element. “In one way it’s a collection of items,” says Kyle Parry, metaLAB’s liaison to the Digital Archive, “but as you move through it, you’re not just encountering items—websites and documents and media—you’re also encountering fellow archivists through user-generated elements like annotations and curated collections. So in a way, you’re becoming part of a community of archivists whom you may never meet in person, but with whom you’re collaborating.” Adds Shapins, “The Digital Archive of Japan is a genre—an example of a participatory archive.”

That is true in the deepest sense, because in the archive they are building, whenever any participant “touches” an item, “it’s going to ripple, if you will, in the system as a whole,” explains Parry. “When a person adds something to a collection—a tag, say, or geographic information—or even when an object gets visited a lot, touching it will impact the value of that object. All these interactions will increase the chances that it’ll get discovered by somebody else.” Such interactions will thus change the way the archive functions by literally changing where an object is filed in the archive. “It becomes an organic thing,” he says. Konrad Lawson jokes, “It will be like a Heisenberg archive. You won’t be able to be a part of it without affecting it.”

These and other features of the Digital Archive of Japan’s 2011 Disasters are still being developed, but its creators are already thinking about it as a possible model for crisis-archiving of future momentous events. Such archives might even play a role in the formation of cultural memory, Shapins believes. Already, digital media and networked communication have changed the way crises unfold in a community. “When you have real-time communication connecting people, it changes their response to an event and how they experience it,” he says. “It also changes the nature of an archive” by providing an immediacy that didn’t exist before.

“Looking back on an event 30 years from now really matters,” he continues. But there’s also an important process of working through the trauma “in the days, months, and years following an event, for a society locally as well as globally.” Thinking through that aspect of an archive “has been a major motivation” in this project, Shapins says. “Storytelling is…a vital part of…understanding this event. There have been a lot of groups in Japan leading the way, going out with recorders, giving people cameras. For us, to be a part of trying to help provide a common interface that allows for that material to be found, to be shared, and those stories to be known, is sort of the mission for the project.”

While nonprofit Zeega is focused on developing a platform, what metaLAB provides, says Shapins, is “distance from our hands-on work” that can lead to new forms of thinking—whether about the nature of archives, of books, or of software (see “An Interpretive Artist of Urban Space”). It’s “a place for research and critical discourse,” he says: “That reflective dimension is where the humanities bring so much value.”

Mining a Million Texts

Just as empowering crowds in the stewardship of archives has the potential to change the way scholars assemble certain kinds of information, so digital methods of scholarly inquiry could expand both the temporal and intellectual scope of research and publication in a field. Historian Jo Guldi, a junior fellow of the Society of Fellows, has been reflecting on the impact of communication revolutions for some time, and using digital tools such as text-mining and visualization in her research. Her first book, Roads to Power: Britain Invents the Infrastructure State, considers how communication revolutions (roads in nineteenth-century Britain, but also, by analogy, digital pathways) can have the unexpected consequence of further widening the divide between rich and poor.

At the University of Chicago, she taught a graduate course in the digital humanities, one of the first of its kind in the United States; the class worked with visual information designers at Google and the IBM visualization lab. “These designers want to show change over time by identifying important events in history or in a person’s life,” explains Guldi—to figure out, for example, “not only what was the most frequently cited event in a revolution like the Arab Spring uprising, but what was the most important. Which one influenced the others? That,” she says, “is a historian’s question.” Google can’t count tweets or textual references numerically to find the answer, but “maybe there is some other way to show those associations. To write an algorithm that could show such a thing, the designers need the kinds of thinking—how to define an ‘event’ according to Hegel’s philosophy of history, for instance—that humanists are trained in.”

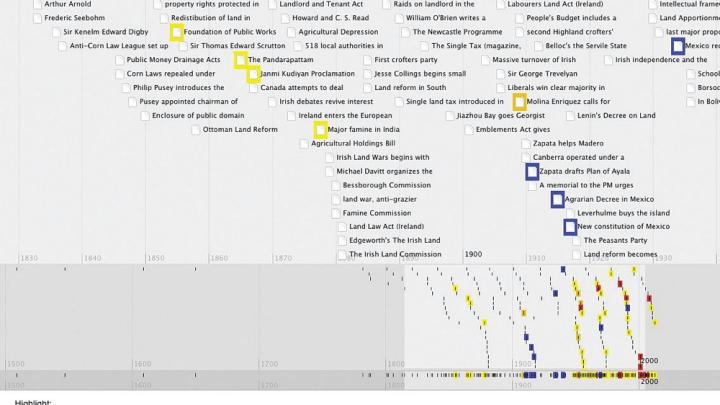

At a recent meeting of the American Historical Association, Guldi proposed that the power of digital tools in research would expand the focus of dissertations from the 20-year span that has been “the hallmark of historical scholarship over the last three decades” to 150 years. She herself is at work on a world history of land reform from 1860 to the present, and sees the ambitious project as a way of proving or disproving—to the extent it is successful—the power of digital methods of scholarly research. She displays on her computer a visualization that demonstrates a cultural “turn”—a shift in a corpus of texts about land reform—by mapping textual references to particular subjects through time. Color-coding these references by country and region, she can show how land reform began in developed countries and then spread, eventually reaching Africa and Latin America. The product is evidence of a kind (it could become a figure in a book), but she is using it instead as a tool to guide her research.
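The counting that sits behind a visualization of this kind can be sketched in a few lines of Python. This is only an illustration of the general technique (tallying references to a subject by decade and region), not Guldi's actual method or data: the four-document corpus below is invented, and the field names are assumptions.

```python
from collections import defaultdict
from typing import Dict, List

# Invented toy corpus; a real study would draw on many thousands of texts.
CORPUS: List[dict] = [
    {"year": 1885, "region": "Europe", "text": "Debate over land reform and tenant rights."},
    {"year": 1952, "region": "Africa", "text": "Land reform proposals; land reform commissions."},
    {"year": 1957, "region": "Latin America", "text": "A report on land reform."},
    {"year": 1958, "region": "Africa", "text": "Irrigation survey."},
]


def mentions_by_decade(corpus: List[dict], phrase: str) -> Dict[int, Dict[str, int]]:
    """Count occurrences of a phrase, grouped by decade and then by region."""
    table: Dict[int, Dict[str, int]] = defaultdict(lambda: defaultdict(int))
    needle = phrase.lower()
    for doc in corpus:
        hits = doc["text"].lower().count(needle)
        if hits:
            decade = doc["year"] // 10 * 10
            table[decade][doc["region"]] += hits
    # Convert to plain dicts, ordered by decade, for easy printing or plotting.
    return {decade: dict(regions) for decade, regions in sorted(table.items())}


table = mentions_by_decade(CORPUS, "land reform")
for decade, regions in table.items():
    print(decade, regions)
```

Each region's counts could then be drawn as a colored line or band over time. As the article stresses, such a chart points to where closer reading is needed; it does not replace that reading.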

“There has never been a world history of land reform, and it happened everywhere in the nineteenth and twentieth centuries,” she says. The question of who controls land and who is being evicted is tied to events like cultural revolutions, she explains: “It happens in every Latin American country in the 1960s and ’70s, often with U.S. involvement.”

Looking at the visualization, Guldi can “see the moment when suddenly Africa and Latin America enter the conversation….The moment is 1950. And then the reason becomes clear: the founding of the Food and Agriculture Organization of the United Nations, which becomes an arm of U.S. foreign policy and embraces land reform on the British model as a means for creating a peaceful path to prosperity.” The visualization tells her where she needs to read more about this key moment in the history of her subject.

Guldi makes use as well of geoparsing, a kind of text mining that grabs place names—from antebellum American novels, for example—and, using a gazetteer, sites them on a map to provide a sense of landscape as it existed in the American imagination at that time. “Then you can ask all sorts of comparative questions,” she says. Did the concept change after the Civil War? Did it change differently in the South than in the North? But Guldi also cautions that, like the digital timeline, the changing shape of the American imagination in novels is “merely suggestive”: it gives scholars “more justification for choosing certain places” to do deeper reading.
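In outline, geoparsing looks something like the following Python sketch. It is deliberately simplified: it matches place names by plain string search against a three-entry gazetteer invented for the example (with approximate coordinates), whereas real geoparsers typically add named-entity recognition and must disambiguate places that share a name. The sample passage is also invented.

```python
import re
from collections import Counter
from typing import Dict, List, Tuple

Coordinates = Tuple[float, float]

# Hypothetical miniature gazetteer: place name -> approximate (latitude, longitude).
GAZETTEER: Dict[str, Coordinates] = {
    "Boston": (42.36, -71.06),
    "New Orleans": (29.95, -90.07),
    "Charleston": (32.78, -79.93),
}


def geoparse(text: str, gazetteer: Dict[str, Coordinates]) -> List[Tuple[str, Coordinates, int]]:
    """Find gazetteer place names in a text and return (name, coordinates, count)."""
    counts: Counter = Counter()
    for name in gazetteer:
        # Word boundaries keep "Boston" from matching inside a longer word.
        pattern = r"\b" + re.escape(name) + r"\b"
        found = len(re.findall(pattern, text))
        if found:
            counts[name] = found
    return [(name, gazetteer[name], n) for name, n in counts.most_common()]


# Invented sample passage standing in for a novel.
sample = (
    "She left Boston in the spring and reached New Orleans by river. "
    "Letters from Boston followed her."
)
places = geoparse(sample, GAZETTEER)
for name, coords, n in places:
    print(name, coords, n)
```

Running the same function over novels from before and after the Civil War, or from Northern and Southern publishers, would yield the place counts needed for the comparative questions Guldi describes.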

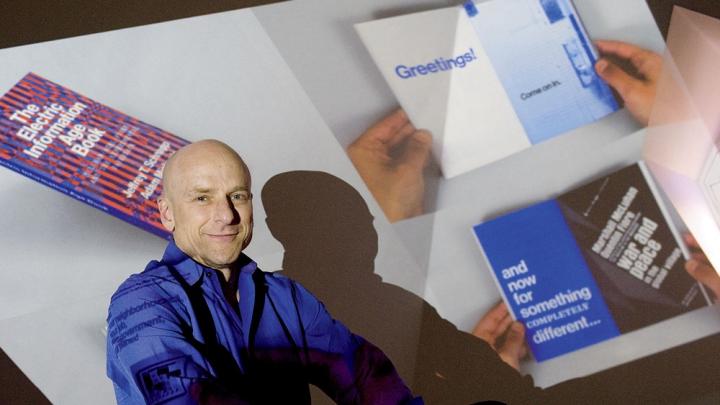

The ability to analyze a vast body of texts also implies a dramatic expansion of the field of questions humanities scholars can ask. Professor of Romance languages and literatures and comparative literature Jeffrey Schnapp, the faculty director of metaLAB, says, “Most literary historians work on a small corpus of texts where their expertise is manifest through the finesse with which they can demonstrate certain features of that corpus. Those noble skill sets are not about to disappear with a wave of the digital magic wand. On the other hand,” he explains, “there are really exciting research questions on the scale of, ‘How does the socioeconomic history of publishing as an industry relate to the production of certain literary genres?’ And when you start to operate on that scale, of course your data set has suddenly expanded: no human being can possibly read the one million books on the shelf that might document that history.” The use of computational and statistical methods becomes mandatory. “Where does that put us?” he asks. “Well, it puts us at a place where the boundary line between what we have traditionally called the humanities and what we have traditionally called the social sciences becomes awfully porous. For me that’s an expansion and enhancement of the humanities of the most creative and best sort.”

Rewriting the Rules

There are highly significant changes afoot in the humanities, but the idea that there is a revolution underway is “sometimes overstated,” Schnapp says. “I have been involved in experiments with this sort of work going back to the early ’80s, but I would argue that the true transformational moments still lie in the future. The reality is that game-changing research, solutions to the richest, most challenging disciplinary questions, and major breakthroughs develop as a result of deep and long traditions of inquiry. Tools and technologies may vastly expand the compass of research and alter the basic conditions of knowledge production. But, in and of themselves, they don’t pose or answer interesting research questions. That’s what people do.”

Many areas of the humanities have always been engaged with fundamental issues that have reemerged as central to the digital humanities, he says, such as “the relationship between text and image, the analysis of cultural networks, or the very multiplicity of print itself as an instrument for communicating and conveying knowledge, everything from typography and book design to systems of distribution.”

Schnapp is in a profound sense a designer himself: of exhibitions, of books, of communities, of interfaces, and of appliances for teaching and learning. “Design means everything from typography to design in the abstract, the cognitive sense of how you conceptualize something, thinking about the ways in which art, or sound, or tactile environments operate separately or together,” he says. “What can you do on a screen that you can’t do in a physical environment and vice versa?” And it means thinking also about the traditional publishing model—where research ends with a stable artifact, like a book—versus one that is iterative, is disseminated in multiple forms, and generates continual feedback, unifying the linear stages of the traditional research cycle into one ongoing parallel process. For Schnapp, devising new models of scholarly publishing that enhance academic study is a design question—an urgent one. As he puts it, “When you move from a universe where the rules with respect to a scholarly essay or monograph have been fully codified, to a universe of experimentation in which the rules have yet to be written, characterized by shifting toolkits and skillsets, in which genres of scholarship are undergoing constant redefinition, you become by necessity a knowledge designer.”

Schnapp remains passionate about print. One of his goals is to figure out ways to “reimagine print culture,” and so metaLAB is experimenting with new publishing models, print as well as digital. In 2013, the lab will publish a series with Harvard University Press (then celebrating its hundredth anniversary) called metaLAB Projects, dedicated to exploring what a scholarly book might look like in an era where knowledge is being produced in digital forms from the outset. Schnapp is writing about “the library after the book,” for example (a deliberately provocative title); Manuelian is writing about the translation of archaeological knowledge into simulations.

Among the experimental models of “print-plus” publishing Schnapp is exploring is the creation of augmented online editions of printed books—adding audio, for instance, so students can “hear the ferocity of a debate on a contested question”—or engaging a community of contemporary scholars to “remix” traditional scholarly books. “For instance, I started out as a Dante scholar,” he says, “and once worked principally with fourteenth-century Italian literary culture. What if I could use my expert knowledge to remix one of the most important critical works in my field that was written 50 years ago…adding layers of multimedia commentary that brought it into the present? A lot has happened in those 50 years.”

Communities of scholars have always been integral to the history of research, he continues, “but it’s typically been difficult to perceive this collective dimension. We can now imagine vastly expanded forms of virtual assembly and collaboration on the Web.” Exactly how they might give rise to breakthroughs in scholarship remains unclear, “but one of the missions of metaLAB is to serve as a place where experimentation of this kind occurs at a high level, and as an incubator for innovative models that can establish new standards of excellence.”

•••

The changes afoot in the humanities are about expanding the compass, the quality, and the reach of scholarship, Schnapp maintains. “Which means, first of all, not a monolithic model of knowledge production. There is no such thing as ‘The Digital Humanities’; there are multiple emerging domains of experimental practice that fall under this capacious umbrella. Second,” he continues, “some of these domains of practice imply novel sorts of research questions and results; but others involve reviving forms of scholarship—like critical editions and commentaries—that were killed off by market constraints within university publishing. A lot of spaces that have been closed down are being reopened, thanks to the digital turn. And third, research tools and methodologies necessarily evolve and the humanities are no exception.

“Our ability to access and analyze information has created possibilities unimaginable only a few generations ago,” he says. “I think the quality of scholarship that can be produced, working with vastly expanded cultural corpora, and speaking in contemporary language to expanded audiences, represents one of the great promises of our era. So for me, this is a uniquely exciting moment for the humanities, comparable to the Copernican revolution or the discovery of the New World.”
